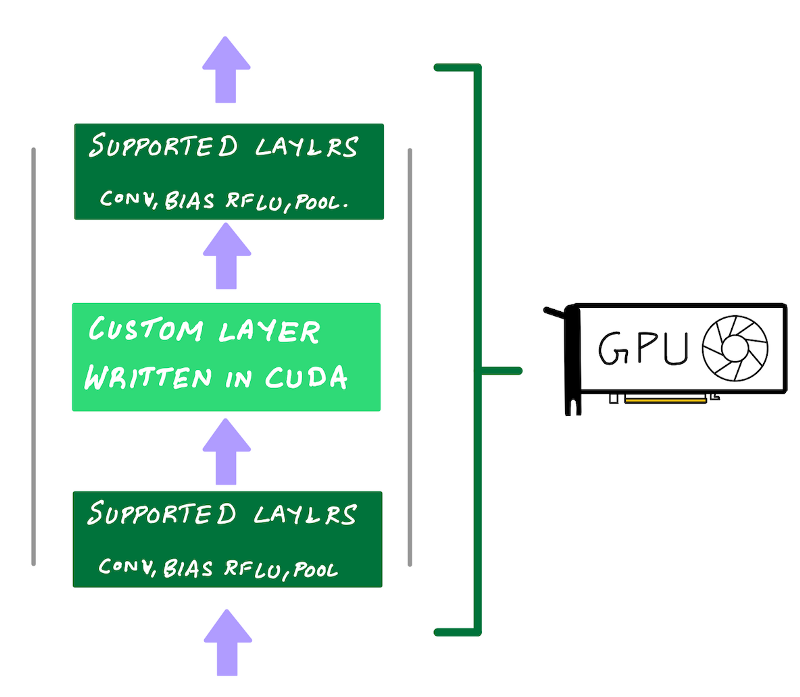

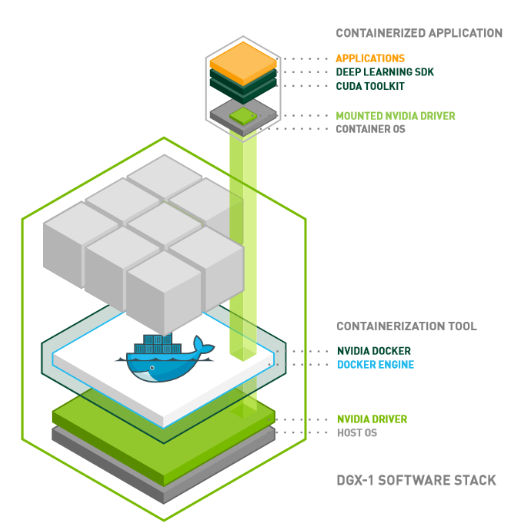

A complete guide to AI accelerators for deep learning inference — GPUs, AWS Inferentia and Amazon Elastic Inference | by Shashank Prasanna | Towards Data Science

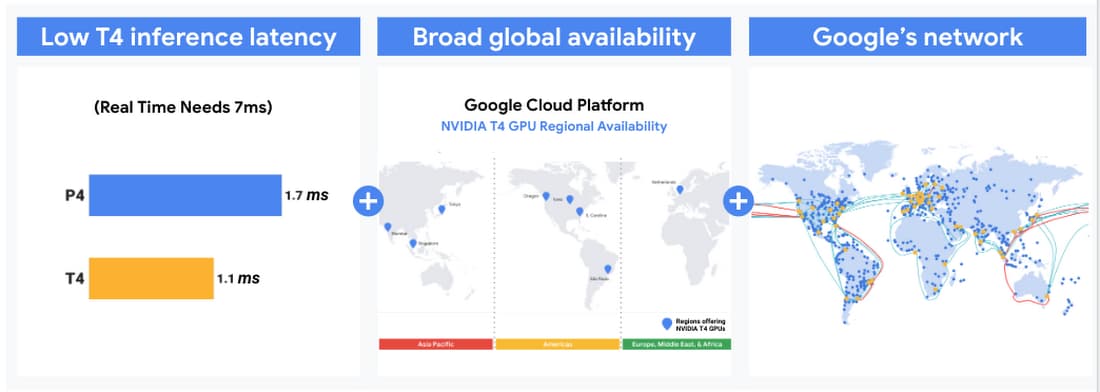

Efficiently scale ML and other compute workloads on NVIDIA's T4 GPU, now generally available | Google Cloud Blog

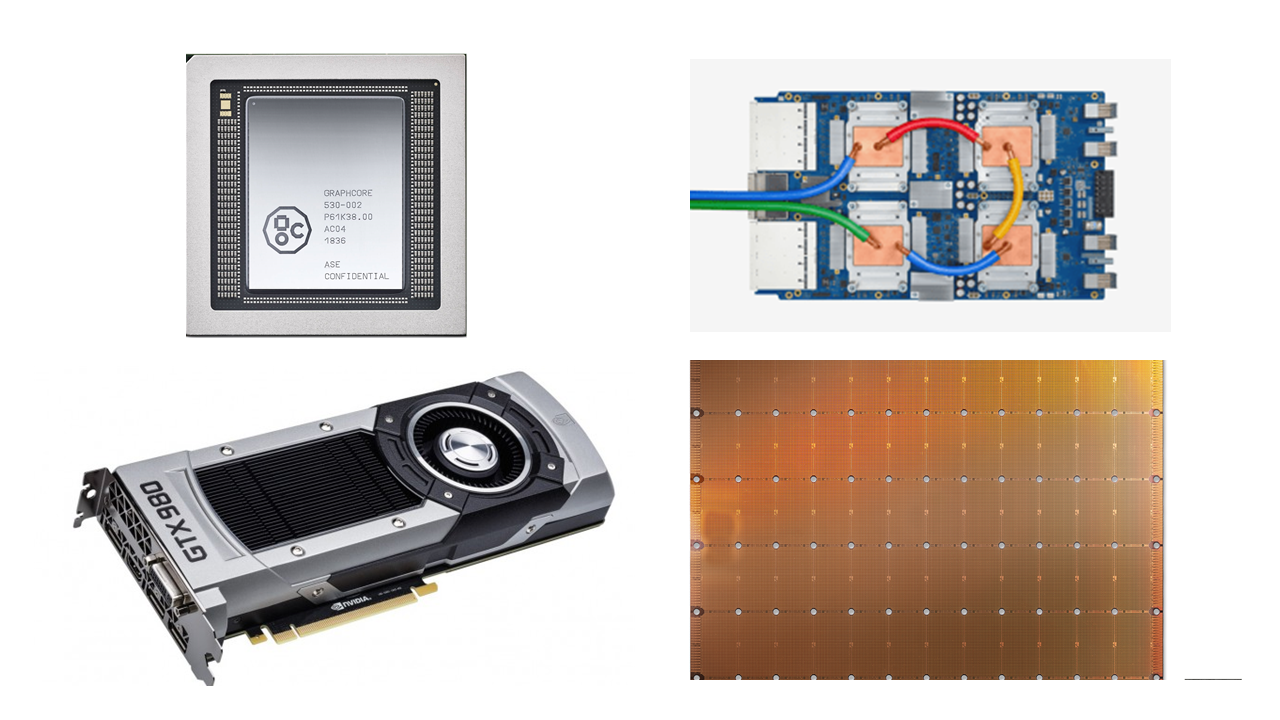

TPU vs GPU vs Cerebras vs Graphcore: A Fair Comparison between ML Hardware | by Mahmoud Khairy | Medium

![Best GPUs for Deep Learning (Machine Learning) 2021 [GUIDE]](https://i1.wp.com/saitechincorporated.com/wp-content/uploads/2021/06/maxresdefault.jpg?resize=580%2C326&ssl=1)